I am a graduate student in the Computer Science Department at the University of Maryland, College Park. My research advisor is Prof. David Jacobs. I completed my undergraduate degree in Electrical Engineering at the Indian Institute of Technology. In the winter of 2006, I was touched by the miracles of String Theory and fell in love with mathematics. My primary interest lies in mathematical modelling and inference from images and textual data. So far, I have worked in biometrics, with a focus on pose and illumination invariant face recognition using latent space modelling. I have also worked on multi-modal correlation filters for infra-red image understanding and fusion. Recently I have become interested in deep learning, inspired by the great success of deep convolutional neural networks (CNNs) in large-scale object recognition. However, rather than using deep convnets as a black box for object classification, I am more interested in applying CNNs to novel tasks such as image understanding and semantic feature extraction.

CV and Google Scholar

Address - Room 3250, AV Williams

Computer Science Department

University of Maryland, College Park

Phone - 240-476-8060

E-mail -

A. Deep Learning

Recently, deep learning based methods have shown significantly improved performance on both visual and textual understanding tasks. I have pursued deep learning for such tasks, but rather than using the networks as black-box classifiers, I am more interested in exploring them for novel tasks such as semantic scene segmentation, text composition and cross-domain transfer.

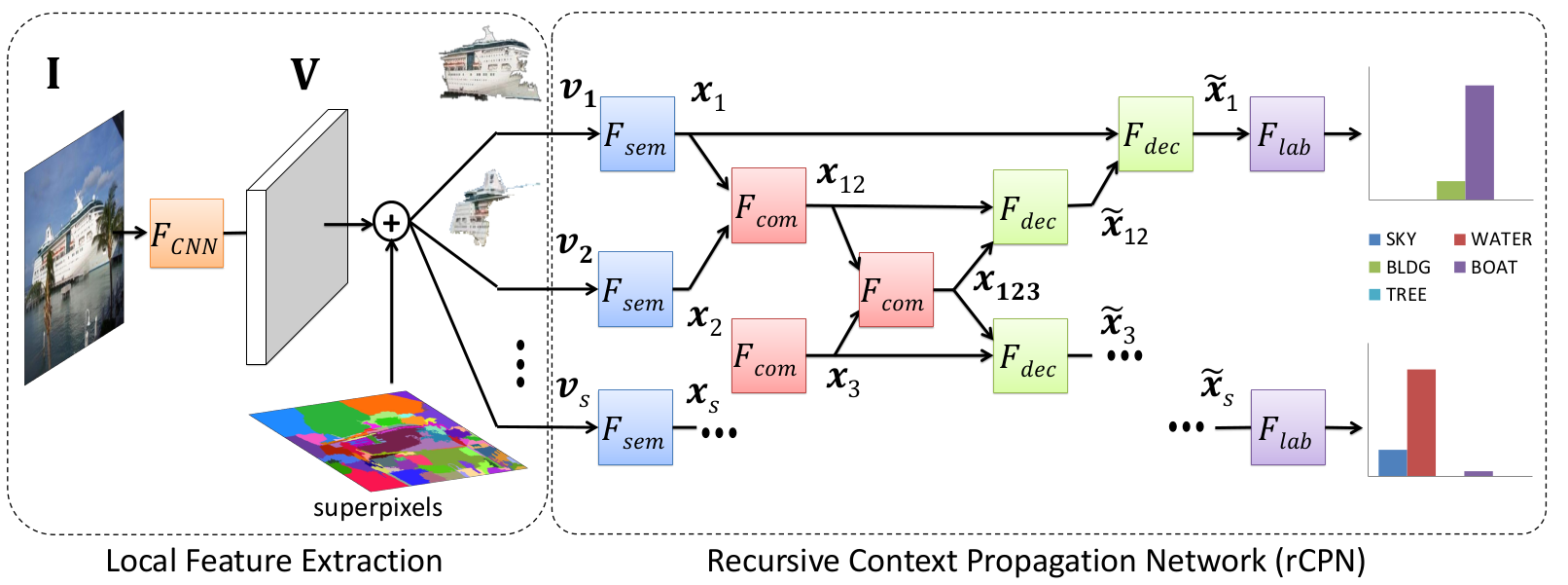

Abhishek Sharma, Oncel Tuzel, Ming Yu Liu: Recursive Context Propagation Network for Scene Labeling. NIPS 2014. [PDF]

We propose a deep feed-forward neural network architecture for pixel-wise semantic scene labeling. It uses a novel recursive neural network architecture for context propagation, referred to as rCPN. It first maps the local visual features into a semantic space followed by a bottom-up aggregation of local information into a global representation of the entire image. Then a top-down propagation of the aggregated information takes place that enhances the contextual information of each local feature. Therefore, the information from every location in the image is propagated to every other location. Experimental results on Stanford background and SIFT Flow datasets show that the proposed method outperforms previous approaches. It is also orders of magnitude faster than previous methods and takes only 0.07 seconds on a GPU for pixel-wise labeling of a 256x256 image starting from raw RGB pixel values, given the super-pixel mask that takes an additional 0.3 seconds using an off-the-shelf implementation.
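The bottom-up and top-down passes described in the abstract above can be illustrated with a small sketch. This is not the published rCPN implementation: the `Node` class, the function names, and the averaging used in `combine` and `decombine` are all assumptions standing in for the learned networks, chosen only to show how information from every leaf reaches every other leaf through a binary parse tree.

```python
from dataclasses import dataclass
from typing import List, Optional

Vector = List[float]


@dataclass
class Node:
    """Node of a binary parse tree over super-pixels (hypothetical structure)."""
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    feature: Optional[Vector] = None  # semantic feature, filled bottom-up
    context: Optional[Vector] = None  # context-enhanced feature, filled top-down


def combine(a: Vector, b: Vector) -> Vector:
    # Placeholder for a learned combiner network: element-wise mean.
    return [(x + y) / 2.0 for x, y in zip(a, b)]


def decombine(parent_context: Vector, child_feature: Vector) -> Vector:
    # Placeholder for a learned de-combiner network: mixes the parent's
    # context with the child's own semantic feature.
    return [(p + c) / 2.0 for p, c in zip(parent_context, child_feature)]


def bottom_up(node: Node) -> Vector:
    # Leaves already carry a feature; internal nodes aggregate their children.
    if node.left is not None and node.right is not None:
        node.feature = combine(bottom_up(node.left), bottom_up(node.right))
    return node.feature


def top_down(node: Node, context: Vector) -> None:
    # Push the aggregated information back down towards the leaves.
    node.context = context
    for child in (node.left, node.right):
        if child is not None:
            top_down(child, decombine(context, child.feature))


def propagate(root: Node) -> None:
    # The root's aggregated feature serves as the global context.
    top_down(root, bottom_up(root))
```

To use the sketch, build leaves with `Node(feature=[...])`, join them pairwise into internal nodes, and call `propagate(root)`; each leaf's `context` then depends on the features of all other leaves and could be passed to a per-super-pixel classifier.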

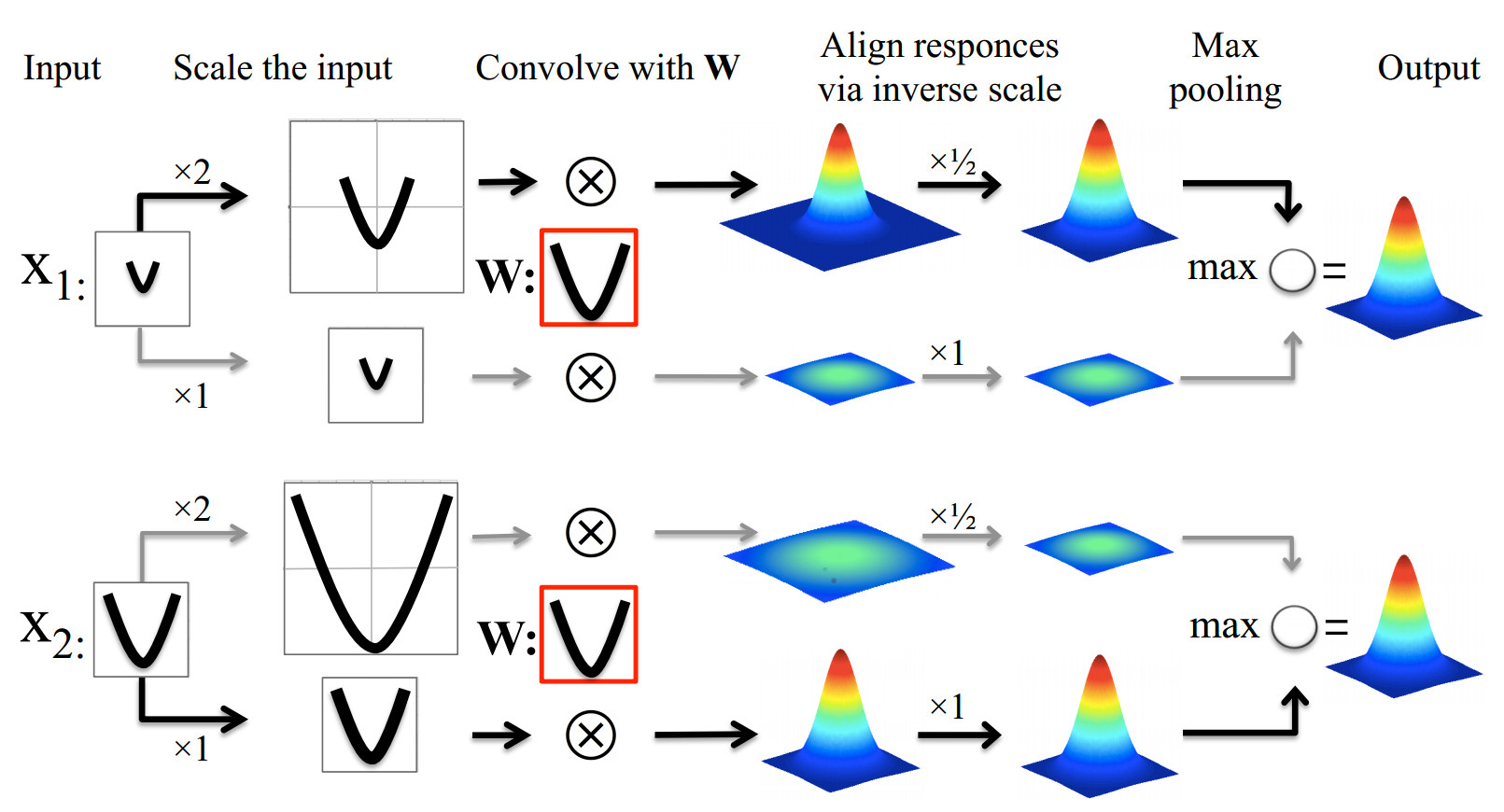

Angjoo Kanazawa, Abhishek Sharma, David W. Jacobs: Locally Scale-invariant Convolutional Neural Network. Deep Learning Workshop NIPS 2014. [PDF]

Convolutional Neural Networks (ConvNets) have shown excellent results on many visual classification tasks. With the exception of ImageNet, these datasets are carefully crafted such that objects are well-aligned at similar scales. Naturally, the feature learning problem gets more challenging as the amount of variation in the data increases, as the models have to learn to be invariant to certain changes in appearance. Recent results on the ImageNet dataset show that given enough data, ConvNets can learn such invariances, producing very discriminative features [1]. But could we do more: use fewer parameters, less data, and learn more discriminative features, if certain invariances were built into the learning process? In this paper we present a simple model that allows ConvNets to learn features in a locally scale-invariant manner without increasing the number of model parameters. We show on a modified MNIST dataset that when faced with scale variation, building in scale-invariance allows ConvNets to learn more discriminative features with reduced chances of over-fitting.
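One way to picture a locally scale-invariant layer without extra parameters is to apply the same filter to several rescaled copies of the input, map the responses back to the original size, and keep the maximum over scales at each location. The 1D sketch below follows that idea under stated assumptions: the function names, the nearest-neighbour resampling, and the default scale set are illustrative choices, not details taken from the paper.

```python
from typing import List, Sequence


def resample(signal: Sequence[float], length: int) -> List[float]:
    """Nearest-neighbour resampling of a 1D signal to the given length."""
    n = len(signal)
    return [signal[(i * n) // length] for i in range(length)]


def correlate_same(signal: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Zero-padded cross-correlation; output has the same length as the input."""
    half = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        total = 0.0
        for k, weight in enumerate(kernel):
            j = i + k - half
            if 0 <= j < len(signal):
                total += weight * signal[j]
        out.append(total)
    return out


def scale_invariant_response(
    signal: Sequence[float],
    kernel: Sequence[float],
    scales: Sequence[float] = (0.5, 1.0, 2.0),  # assumed scale set
) -> List[float]:
    """Max over scales of one shared filter's response at each location."""
    n = len(signal)
    responses = []
    for scale in scales:
        scaled = resample(signal, max(1, round(n * scale)))
        response = correlate_same(scaled, kernel)  # same weights at every scale
        responses.append(resample(response, n))    # undo the rescaling
    # Location-wise max-pooling across scales.
    return [max(values) for values in zip(*responses)]
```

Because the kernel is shared across scales, the number of weights is the same as for an ordinary convolution; only the amount of computation grows with the number of scales.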

Abhishek Sharma, Oncel Tuzel, David W. Jacobs: Deep Hierarchical Parsing for Semantic Segmentation. submitted to IEEE CVPR 2015. [PDF coming soon]

|

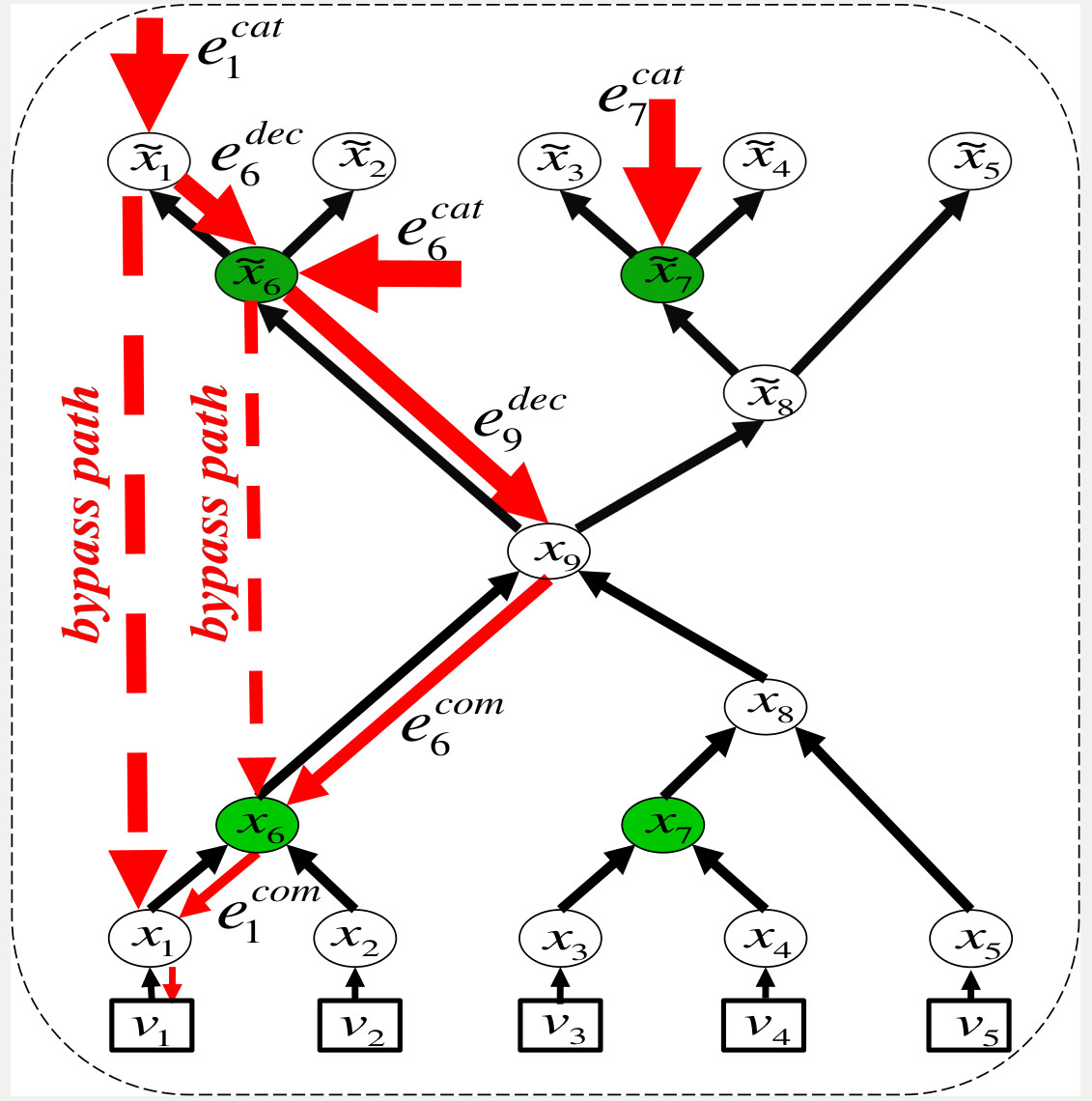

This paper proposes a learning-based approach to scene parsing inspired by the deep Recursive Context Propagation Network (RCPN). RCPN is a deep feed-forward neural network that utilizes the contextual information from the entire image, through bottom-up followed by top-down context propagation via random binary parse trees. This improves the feature representation of every super-pixel in the image for better classification into semantic categories. We analyze RCPN and propose two novel contributions to further improve the model. We first analyze the learning of RCPN parameters and discover the presence of bypass error paths in the computation graph of RCPN that can hinder contextual propagation. We propose to tackle this problem by including the classification loss of the internal nodes of the random parse trees in the original RCPN loss function. Secondly, we use an MRF on the parse tree nodes to model the hierarchical dependency present in the output. Both modifications provide performance boosts over the original RCPN and the new system achieves state-of-the-art performance on Stanford Background, SIFT-Flow and Daimler urban datasets. |

|---|

B. Latent Space Models for Multi-view learning

Data often arrives in different forms, views or modalities that carry similar or complementary information. It is a challenge to retrieve or classify samples in one view using a model trained on another view, or to combine complementary information from different views. This is because different views span different feature spaces, and there is no natural correspondence between the representations that can be used for these tasks. A natural and intuitive way to tackle these problems is to use a generative model from a common latent space to the observed sample spaces and to pool the information from multiple views in that common latent space. The advantage of a common latent space is evident for cross-view classification and retrieval: samples from all views can first be mapped to the common latent space before classification or retrieval. I have pursued the idea of latent space representation for multi-view learning and came up with some interesting solutions to commonly occurring multi-view problems such as pose and lighting invariant face recognition and text-image retrieval.

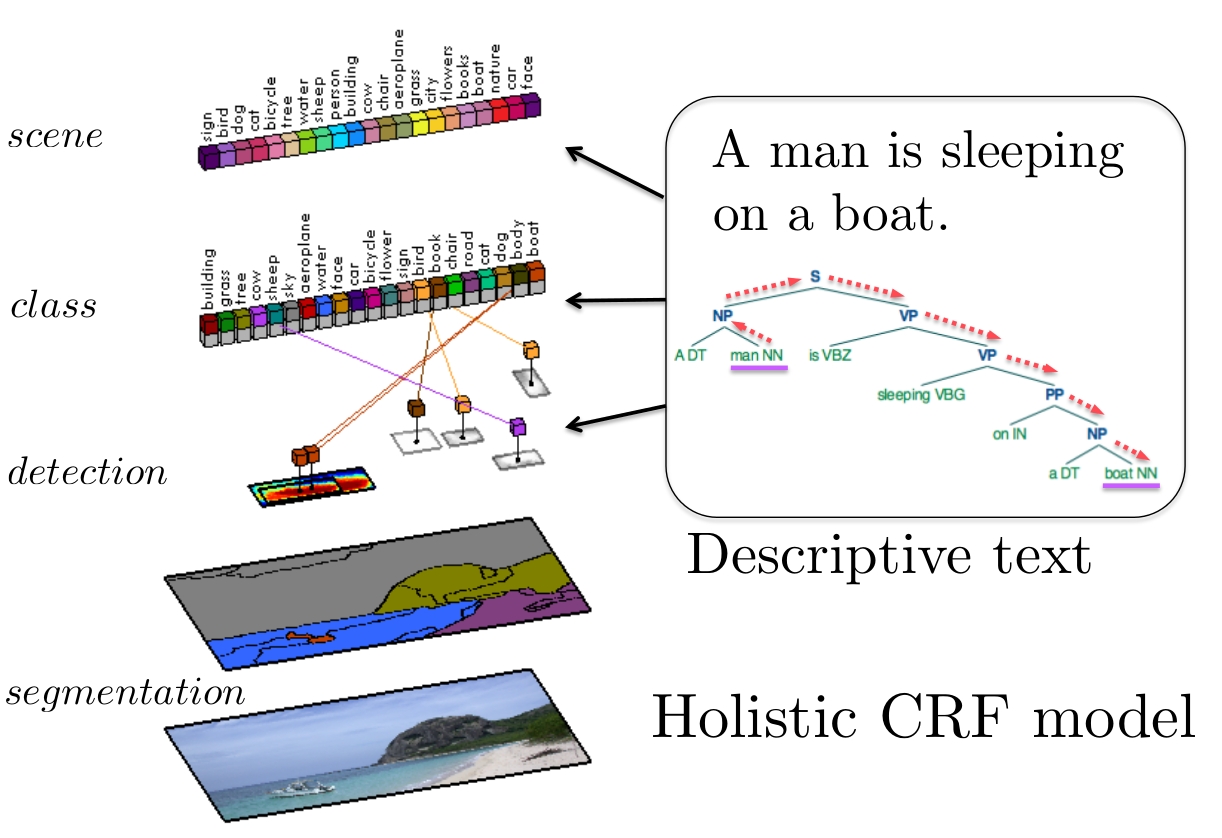

Sanja Fidler, Abhishek Sharma, Raquel Urtasun: A sentence is worth thousands of pixels. CVPR 2013. [PDF]

We are interested in holistic scene understanding where images are accompanied with text in the form of complex sentential descriptions. We propose a holistic conditional random field model for semantic parsing which reasons jointly about which objects are present in the scene, their spatial extent as well as semantic segmentation, and employs text as well as image information as input. We automatically parse the sentences and extract objects and their relationships, and incorporate them into the model, both via potentials as well as by re-ranking candidate detections. We demonstrate the effectiveness of our approach on the challenging UIUC sentences dataset and show segmentation improvements of 12.5% over the visual only model and detection improvements of 5% AP over deformable part-based models.

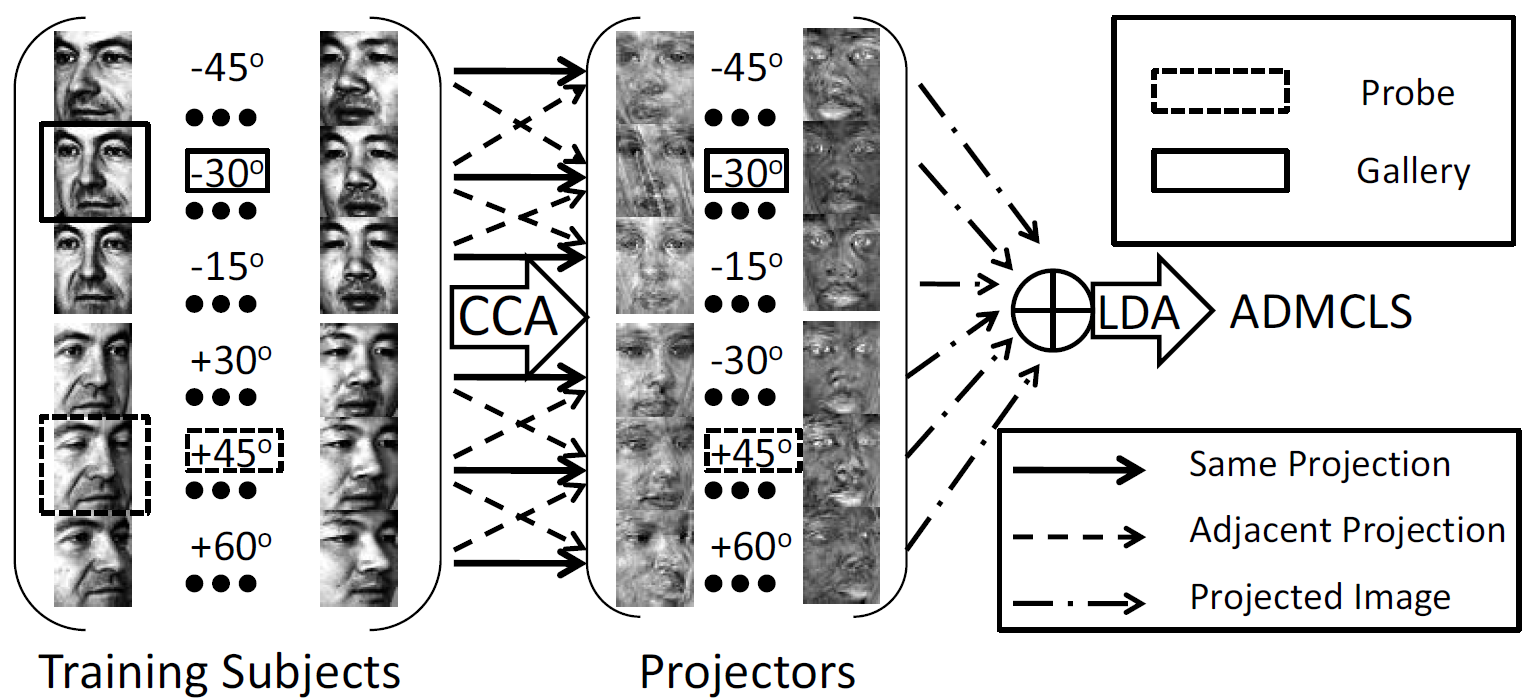

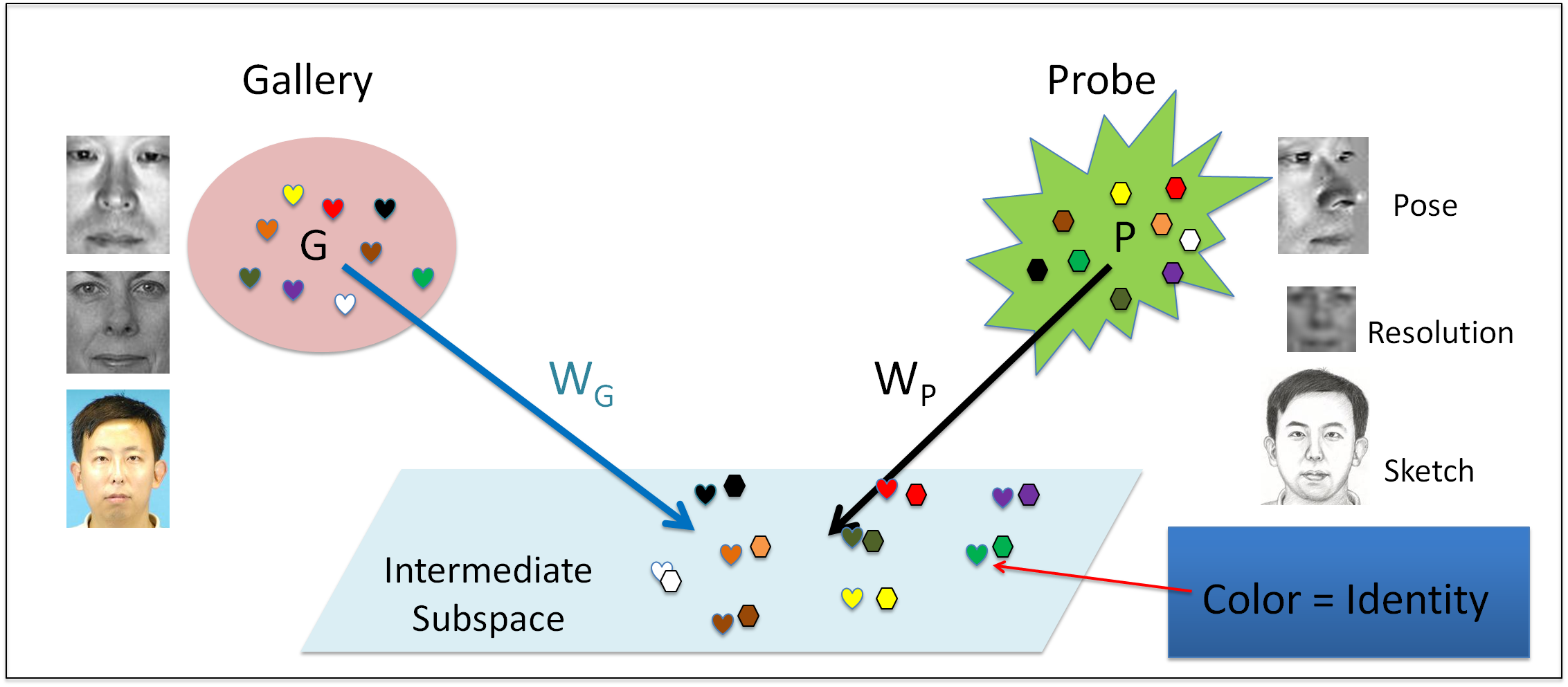

Abhishek Sharma, Murad Al haj, Jonghyun Choi, Larry S Davis and David W Jacobs: Robust Pose Invariant Face Recognition using Coupled Latent Space Discriminant Analysis. CVIU, Volume 116, Issue 11, Pages 1095-1110 (November 2012). [PDF]

[FERET_MultiPIE_fiducials]

We propose a novel pose-invariant face recognition approach which we call the Discriminant Multiple Coupled Latent Subspace framework. It finds sets of projection directions for different poses such that the projected images of the same subject are maximally correlated in the latent space. Discriminant analysis with artificially simulated pose errors in the latent space makes it robust to small pose errors caused by incorrect estimation of a subject's pose. We do a comparative analysis of three popular learning approaches: Partial Least Squares (PLS), Bilinear Model (BLM) and Canonical Correlation Analysis (CCA) in the proposed coupled latent subspace framework. We also show that using more than two poses simultaneously with CCA results in better performance. We report state-of-the-art results for pose-invariant face recognition on CMU PIE and FERET and comparable results on MultiPIE when using only 4 fiducial points and intensity features.

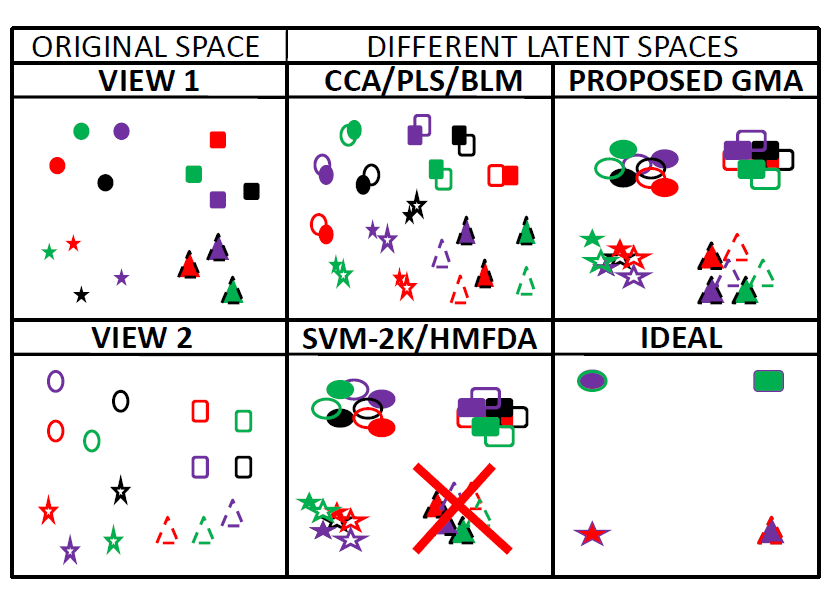

Abhishek Sharma, Abhishek Kumar, Hal Daume III, David W Jacobs: Generalized Multiview Analysis: A Discriminative latent space. CVPR 2012. [PDF] [Poster] [Code]

This paper presents a general multi-view feature extraction approach that we call Generalized Multiview Analysis or GMA. GMA has all the desirable properties required for cross-view classification and retrieval: it is supervised, it allows generalization to unseen classes, it is multi-view and kernelizable, it affords an efficient eigenvalue based solution and is applicable to any domain. GMA exploits the fact that most popular supervised and unsupervised feature extraction techniques are the solution of a special form of a quadratic constrained quadratic program (QCQP), which can be solved efficiently as a generalized eigenvalue problem. GMA solves a joint, relaxed QCQP over different feature spaces to obtain a single (non)linear subspace. Intuitively, GMA is a supervised extension of Canonical Correlation Analysis (CCA), which is useful for cross-view classification and retrieval. The proposed approach is general and has the potential to replace CCA whenever classification or retrieval is the purpose and label information is available. We outperform previous approaches for text-image retrieval on Pascal and Wiki text-image data. We report state-of-the-art results for pose and lighting invariant face recognition on the MultiPIE face dataset, significantly outperforming other approaches.

Abhishek Sharma, David W Jacobs : Bypassing Synthesis: PLS for Face Recognition with Pose, Low-Resolution and Sketch. CVPR 2011. [PDF] [Poster] [PLS code]

This paper presents a novel way to perform multi-modal face recognition. We use Partial Least Squares (PLS) to linearly map images in different modalities to a common linear subspace in which they are highly correlated. PLS has been previously used effectively for feature selection in face recognition. We show both theoretically and experimentally that PLS can be used effectively across modalities. We also formulate a generic intermediate subspace comparison framework for multi-modal recognition. Surprisingly, we achieve high performance using only pixel intensities as features. We experimentally demonstrate the highest published recognition rates on the pose variations in the PIE data set, and also show that PLS can be used to compare sketches to photos, and to compare images taken at different resolutions.
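As a rough illustration of mapping two modalities into a common subspace, the sketch below computes the first pair of PLS-style projection directions: unit vectors `w_x` and `w_y` that maximize the covariance between the projected views, found by power iteration on the cross-covariance of the centered data. This is a generic textbook formulation and not necessarily the PLS variant used in the paper; all function names are hypothetical, and only the first component is computed.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def center(data: Matrix) -> Matrix:
    """Subtract the column means from every row."""
    cols = len(data[0])
    means = [sum(row[j] for row in data) / len(data) for j in range(cols)]
    return [[row[j] - means[j] for j in range(cols)] for row in data]


def normalize(v: Vector) -> Vector:
    # Assumes v is non-zero, i.e. the two views are not completely uncorrelated.
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def project(data: Matrix, w: Vector) -> Vector:
    """Scores of each sample along direction w (data @ w)."""
    return [sum(a * b for a, b in zip(row, w)) for row in data]


def back_project(data: Matrix, scores: Vector) -> Vector:
    """Transpose product (data.T @ scores)."""
    cols = len(data[0])
    return [sum(row[j] * s for row, s in zip(data, scores)) for j in range(cols)]


def first_pls_directions(
    x: Matrix, y: Matrix, iterations: int = 100
) -> Tuple[Vector, Vector]:
    """Leading left/right singular vectors of X^T Y via power iteration."""
    x, y = center(x), center(y)
    w_y = normalize([1.0] * len(y[0]))  # arbitrary starting direction
    w_x: Vector = []
    for _ in range(iterations):
        w_x = normalize(back_project(x, project(y, w_y)))  # X^T Y w_y
        w_y = normalize(back_project(y, project(x, w_x)))  # Y^T X w_x
    return w_x, w_y
```

Rows of `x` and `y` are paired samples of the same subject in two modalities. After fitting, a gallery image from one modality and a probe from the other can be compared through their scores `project(x, w_x)` and `project(y, w_y)`; a practical system would extract several components rather than one.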

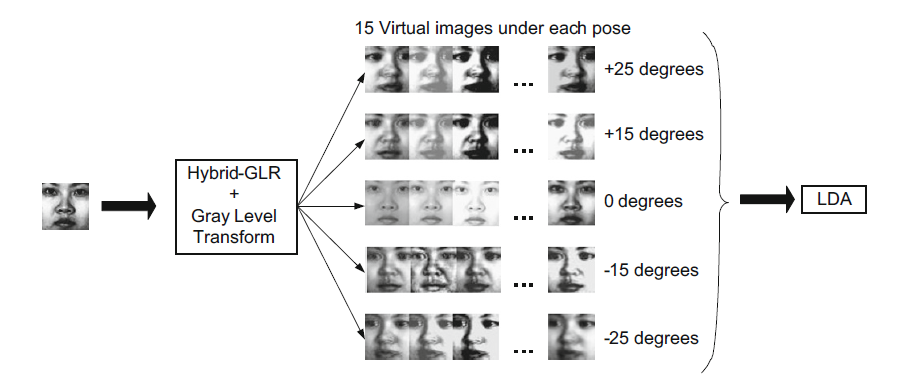

Abhishek Sharma, Anamika Dubey, Pushkar Tripathi, Vinod Kumar : Pose invariant virtual classifiers from single training image using novel hybrid-eigenfaces. Neurocomputing 73 (10-12): 1868-1880 (2010).

A novel view-based subspace termed hybrid-eigenspace is introduced and used to synthesize multiple virtual views of a person under different pose and illumination from a single 2D image. The synthesized virtual views are used as training samples in subspace classifiers (LDA (Belhumeur et al., 1997) [4], 2D LDA (Kong et al., 2005) [22], 2D CLAFIC (Cevikalp et al., 2009) [23], 2D CLAFIC-μ (Cevikalp et al., 2009) [23], NFL (Pang et al., 2007) [18] and ONFL (Pang et al., 2009) [19]) that require multiple training images for pose and illumination invariant face recognition. The complete process is termed a virtual classifier and provides an efficient solution to the “single sample problem” of the aforementioned classifiers. The presented work extends eigenfaces by introducing hybrid-eigenfaces, which are different from the view-based eigenfaces originally proposed by Turk and Pentland (1994) [37]. Hybrid-eigenfaces exhibit properties that are common to faces and eigenfaces. One such property is the high correlation between corresponding hybrid-eigenfaces under different poses, which is absent in eigenfaces. It allows efficient fusion of hybrid-eigenfaces with global linear regression (GLR) (Chai et al., 2007) [36] to synthesize virtual multi-view images without pixel-wise dense correspondence. All the processes are strictly restricted to the 2D domain, which saves a lot of memory and computation. Effectively, PCA and the aforementioned subspaces are extended by the presented work and used for more robust face recognition from a single training image. The proposed methodology is extensively tested on two databases (FERET and Yale), and the results show significant improvement over other 2D methods in tolerance to pose difference and illumination variation between gallery and test images.

Abhishek Sharma, Anamika Dubey, A N Jagannatha, R S Anand : Pose invariant face recognition based on hybrid-global linear regression. Neural Computing and Applications 19 (8): 1227-1235 (2010).

C. Miscellaneous

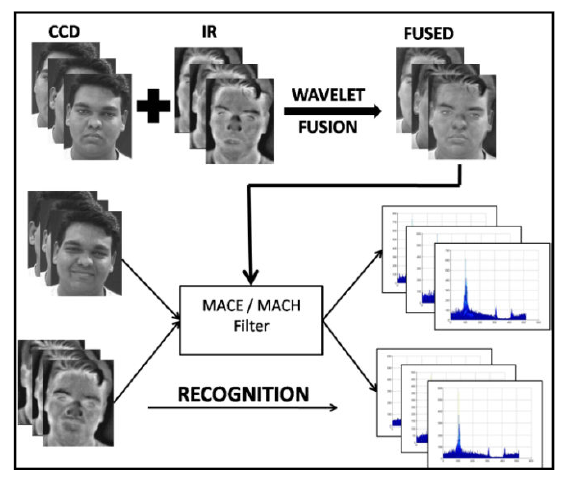

Dubey Anamika, Abhishek Sharma : Multimodal Face Recognition using Hybrid Correlation Filters. Nat. Conf. Computer Vision Pattern Recognition Image Processing & Graphics, 16-17 January 2010, Jaipur, India. PDF

This article introduces a novel hybrid correlation filter for multimodal face recognition in the IR and visible spectrum. In the proposed approach, wavelet based image fusion is used to fuse the face images of a person in the visible and IR spectrum. The fused images are then used to synthesize HMACE (Hybrid Minimum Average Correlation Energy) and HMACH (Hybrid Maximum Average Correlation Height) filters. These hybrid correlation filters contain the information captured in both spectra, so recognition of IR as well as CCD images is possible from the same filter. A direct consequence of this approach is the reduction in storage space by 50%. Thorough experimentation is carried out in which face images of different subjects under different pose, illumination and expression were tested using the proposed filters, and the results are encouraging.

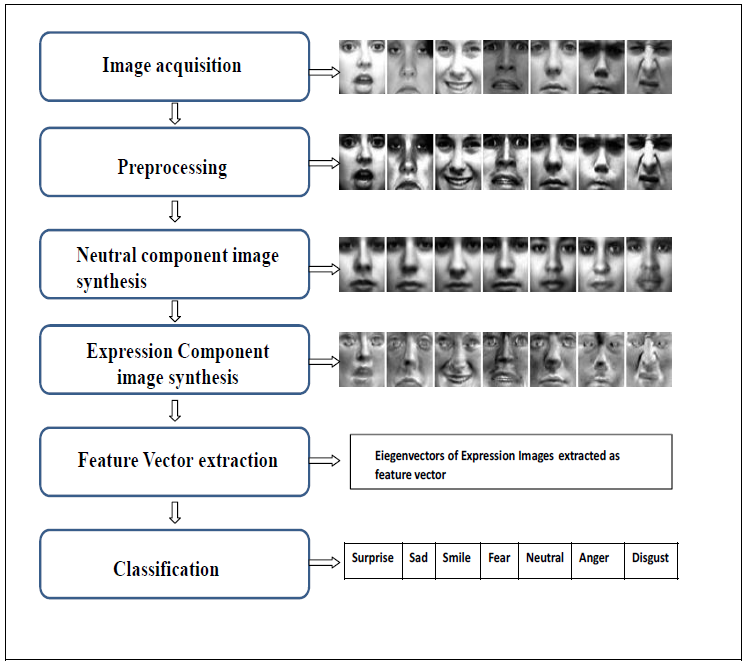

Abhishek Sharma, Dubey Anamika, Facial Expression Recognition using Virtual Neutral Image Synthesis, Nat. Conf. Computer Vision Pattern Recognition Image Processing & Graphics, 16-17 January 2010, Jaipur, India. PDF

A novel feature extraction technique for expression recognition is proposed in this article. The proposed method exploits the properties of eigenvector decomposition to extract the neutral and expression components of a test image containing an expression. The methodology synthesizes a virtual neutral image from the given expression image of a person and then uses it to obtain the difference image, which captures the expression content of the test image. The proposed method eliminates the requirement of a registered neutral image of the same subject whose expression is to be recognized. It thereby provides a way to apply difference-image methods in expression recognition to any unknown subject. The proposed methodology is extensively tested on the Cohn-Kanade expression data set using three different classifiers: FLDA, Multilayer Perceptron and Support Vector Machine. The accuracy obtained by SVM is 92.4%, which is comparable to state-of-the-art methods. Moreover, the required computation is also decreased because the proposed method does not require local features for classification.

Abhishek Sharma, Hao Shi: Innovative Rated-Resource Peer-to-Peer Network. CoRR abs/1003.3326 (2010).

Pushkar Tripathi, Abhishek Sharma, G. N. Pillai, Indira Gupta: Accurate Fault Classification and Section Identification Scheme in TCSC Compensated Transmission Line using SVM. WASET 2011