Chimera is a high-performance computing and visualization cluster that couples central processing units (CPUs), graphics processing units (GPUs), displays, and storage. It is funded by an infrastructure grant from the National Science Foundation. The project is led by Amitabh Varshney, with the participation of 14 other faculty at the University of Maryland. The infrastructure supports a broad program of computing research centered on understanding, augmenting, and leveraging the power of heterogeneous vector computing enabled by GPU co-processors. The driving force is the availability of cheap, powerful, programmable GPUs, a result of their commercialization for interactive 3D graphics applications, including games. The coupled CPU-GPU cluster enables several new research directions in computing, as well as a better understanding of, and faster solutions to, several existing interdisciplinary problems through a visualization-assisted computational steering environment.

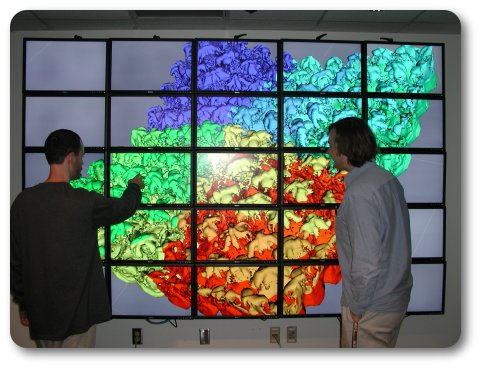

The research groups using this cluster fall into several broad interdisciplinary computing areas. In visualization, we are exploring the display of large datasets and algorithms for parallel rendering. In high-performance computing, we are developing and analyzing efficient algorithms for querying large scientific datasets and for modeling complex systems whose models include uncertainty. In computational biology, we are using the cluster for computational modeling and visualization of proteins, conformational steering in protein structure prediction, and sequence alignment. Other applications include real-time computer vision, real-time 3D virtual audio, large-scale modeling of neural networks, and high-energy physics. Coupled with a large-area, high-resolution display wall, the cluster is a valuable resource for presenting, interactively exploring, evaluating, and validating ongoing research in visualization, vision, scientific computing, human-computer interfaces, and computational biology, with the active participation of both graduate and undergraduate students.

The cluster hardware consists of:

- Display wall of 25 Dell 30-inch LCDs, each 2560 × 1600 pixels

- 16 NVIDIA Tesla S1070 GPUs

- 28 CPU/GPU display nodes

- 36 CPU/GPU compute nodes

- ONStor network-attached storage (NAS) system

- Sun StorageTek or DataDirect Networks S2A storage area network (SAN)

- Mellanox InfiniScale IV QDR (Quad Data Rate) InfiniBand

- Traditional 1 Gb Ethernet communication links between nodes

Each display node consists of:

- Two Intel Xeon quad-core 2.5 GHz processors

- 16 GB of RAM

- Two 250 GB disks

- NVIDIA dual-GPU GTX 295

Each compute node consists of:

- Sun Fire X4170 server

- Two Intel Xeon "Nehalem" quad-core 2.8 GHz processors

- 24 GB of RAM

- Two 146 GB 10K rpm SAS drives
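The per-node figures above can be rolled up into cluster-wide totals. The following is a minimal sketch of that arithmetic, not an official specification: it assumes every node of a given type matches the listed configuration exactly, and the names `NodeSpec`, `wall_pixels`, and `summarize` are hypothetical, introduced only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """One node type, using the counts listed above (assumed uniform)."""
    count: int             # number of nodes of this type
    sockets: int           # CPU sockets per node
    cores_per_socket: int  # quad-core processors have 4 cores each
    ram_gb: int            # RAM per node, in GB

    @property
    def total_cores(self) -> int:
        return self.count * self.sockets * self.cores_per_socket

    @property
    def total_ram_gb(self) -> int:
        return self.count * self.ram_gb


# Values taken from the hardware lists above.
DISPLAY_NODES = NodeSpec(count=28, sockets=2, cores_per_socket=4, ram_gb=16)
COMPUTE_NODES = NodeSpec(count=36, sockets=2, cores_per_socket=4, ram_gb=24)


def wall_pixels(panels: int = 25, width: int = 2560, height: int = 1600) -> int:
    """Total pixel count of the display wall (panels x width x height)."""
    return panels * width * height


def summarize() -> dict:
    """Aggregate totals across display and compute nodes."""
    return {
        "wall_pixels": wall_pixels(),
        "cpu_cores": DISPLAY_NODES.total_cores + COMPUTE_NODES.total_cores,
        "ram_gb": DISPLAY_NODES.total_ram_gb + COMPUTE_NODES.total_ram_gb,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value:,}")
```

Under these assumptions, the display wall totals about 102 megapixels, and the display and compute nodes together provide 512 CPU cores and 1,312 GB of RAM. GPU totals are left out because the lists above do not state how many GPU cards each node holds.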
