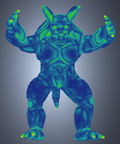

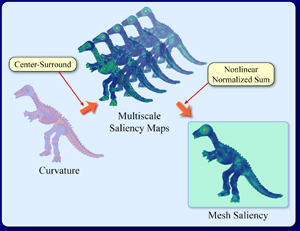

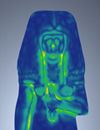

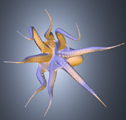

Research over the last decade has built a solid mathematical foundation for the representation and analysis of 3D meshes in graphics and geometric modeling. Much of this work, however, does not explicitly incorporate models of low-level human visual attention. In this research we introduce the idea of mesh saliency as a measure of regional importance for graphics meshes. Our notion of saliency is inspired by low-level human visual system cues. We define mesh saliency in a scale-dependent manner using a center-surround operator on Gaussian-weighted mean curvatures. We observe that such a definition of mesh saliency is able to capture what most would classify as visually interesting regions on a mesh. The human-perception-inspired importance measure computed by our mesh saliency operator yields more visually pleasing results in the processing and viewing of 3D meshes than a purely geometric measure of shape, such as curvature. We discuss how mesh saliency can be incorporated in graphics applications such as mesh simplification and viewpoint selection, and present examples that show visually appealing results from using mesh saliency.

We have witnessed significant advances in the theory and practice of 3D graphics meshes over the last decade. Much of this work has focused on using mathematical measures of shape, such as curvature. The rapid growth in the number and quality of graphics meshes, and their ubiquitous use in a large number of human-centered visual computing applications, suggests the need for incorporating insights from human perception into mesh processing. Although excellent work has been done in incorporating principles of perception in managing level of detail for rendering meshes, less attention has been paid to the use of perception-inspired metrics for processing meshes.

Our goal in this research is to bring perception-based metrics to bear on the problem of processing and viewing 3D meshes. Purely geometric measures of shape such as curvature have a rich history of use in the mesh processing literature. However, a purely curvature-based metric may not necessarily be a good metric of perceptual importance. For example, a high-curvature spike in the middle of a largely flat region will likely be perceived as important. However, it is also likely that a flat region in the middle of densely repeated high-curvature bumps will be perceived as important as well. Repeated patterns, even if high in curvature, are visually monotonous. It is the unusual or unexpected that delights and interests. We introduce mesh saliency as a metric for measuring regional importance using a center-surround mechanism at multiple scales.

In this research, we introduce the concept of mesh saliency, a measure of regional importance, for 3D meshes, and present a method to compute it. A number of tasks in graphics can benefit from a computational model of mesh saliency, and in this research we explore the application of mesh saliency to mesh simplification and view selection. The main contributions of this research are:

- Saliency Computation: There can be a number of definitions of saliency for meshes. We outline one such method for graphics meshes based on the Gaussian-weighted center-surround evaluation of surface curvatures. Our method has given us very promising results on several 3D meshes.

- Salient Simplification: We discuss how traditional mesh simplification methods can be modified to accommodate saliency in the simplification process. Our results show that saliency-guided simplification can easily preserve visually salient regions in meshes that conventional simplification methods typically do not.

- Salient Viewpoint Selection: As databases of 3D models evolve to very large collections, it becomes important to automatically select viewpoints that capture the most salient attributes of objects. We present a saliency-guided method for viewpoint selection that maximizes visible saliency.
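As a rough illustration of the salient simplification idea in the list above, the sketch below scales a conventional edge-collapse cost by a saliency-dependent weight, so that edges in salient regions are collapsed later. This is a minimal sketch under stated assumptions, not the specific modification used in this work: the function `collapse_order`, the `strength` parameter, the weighting formula, and the precomputed `base_cost` dictionary are all hypothetical. A real simplifier would also update costs after each collapse, which is omitted here.

```python
import heapq

def collapse_order(edges, base_cost, saliency, strength=1.0):
    """Order edges by a saliency-scaled collapse cost, cheapest first.

    edges     : list of (u, v) vertex-index pairs
    base_cost : dict mapping each edge to a geometric cost (e.g. a quadric error)
    saliency  : per-vertex saliency values, indexable by vertex index
    strength  : hypothetical knob controlling how much saliency delays a collapse
    """
    heap = []
    for (u, v) in edges:
        # Assumption: an edge is as salient as its most salient endpoint.
        weight = 1.0 + strength * max(saliency[u], saliency[v])
        heapq.heappush(heap, (base_cost[(u, v)] * weight, (u, v)))
    # Pop every entry to obtain the static collapse order.
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]

# Usage: equal geometric costs, but vertex 0 is highly salient.
edges = [(0, 1), (1, 2), (2, 3)]
base_cost = {e: 1.0 for e in edges}
saliency = [2.0, 0.0, 0.0, 0.5]
order = collapse_order(edges, base_cost, saliency)
```

With equal geometric costs, the edge touching the most salient vertex ends up last in the order, which is the qualitative behavior the bullet describes: more triangles survive around salient regions.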

Our mesh saliency computation approach is based on a center-surround operator, which is present in many models of human vision. We use this approach primarily because it is a straightforward way of finding regions that are unique relative to their surroundings. For this reason, it is plausible that mesh saliency may capture the regions of 3D models that humans will also find salient. Our experiments provide preliminary indications that this may be true.
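The center-surround idea can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions rather than the exact operator used in this work: it assumes per-vertex mean curvature is already available, uses Euclidean distance between vertices, truncates the Gaussian at a hypothetical cutoff of twice the scale, and takes the "surround" to be the same filter at twice the scale. The function names are illustrative.

```python
import numpy as np

def gaussian_weighted_curvature(vertices, curvature, sigma):
    """Gaussian-weighted average of per-vertex curvature at scale sigma."""
    # Pairwise squared distances (assumption: Euclidean, dense O(n^2) for clarity).
    diff = vertices[:, None, :] - vertices[None, :, :]
    d2 = np.sum(diff * diff, axis=-1)
    weights = np.exp(-d2 / (2.0 * sigma ** 2))
    # Hypothetical cutoff: ignore vertices farther than 2 * sigma.
    weights[d2 > (2.0 * sigma) ** 2] = 0.0
    # Each vertex has weight 1 for itself, so the denominator is never zero.
    return (weights @ curvature) / weights.sum(axis=1)

def center_surround_saliency(vertices, curvature, sigma):
    """Absolute difference between a fine-scale and a coarse-scale average."""
    center = gaussian_weighted_curvature(vertices, curvature, sigma)
    surround = gaussian_weighted_curvature(vertices, curvature, 2.0 * sigma)
    return np.abs(center - surround)

# Usage: a flat 7x7 grid with a single high-curvature vertex in the middle.
xs, ys = np.meshgrid(np.arange(7.0), np.arange(7.0), indexing="ij")
vertices = np.stack([xs.ravel(), ys.ravel(), np.zeros(49)], axis=1)
curvature = np.zeros(49)
curvature[24] = 1.0  # index of the grid center (3, 3)
saliency = center_surround_saliency(vertices, curvature, sigma=1.0)
```

On this toy input the isolated spike receives the highest saliency, while a mesh with uniform curvature everywhere scores zero, which matches the intuition that repeated patterns are not salient. Evaluating the operator at several values of `sigma` gives one saliency map per scale.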

We have shown the applicability of mesh saliency to mesh simplification and viewpoint selection. Applying our saliency model to guide the simplification of meshes has given us very effective results. Our saliency-based simplification retains more triangles around salient regions than previous methods. For example, the ears, nose, lips, and eyes of the human face model are better preserved. Our saliency-based method selects viewpoints that capture the most salient features of objects.

Figure panels (two pairs): curvature-guided viewpoint, saliency-guided viewpoint.
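Selecting a viewpoint that maximizes visible saliency can be illustrated as follows. This is a minimal sketch, assuming a finite set of candidate viewpoints and a precomputed boolean visibility table; how visibility is determined and how the search over viewpoints is carried out are not specified here, so the exhaustive search and the name `select_viewpoint` are illustrative assumptions.

```python
import numpy as np

def select_viewpoint(saliency, visibility):
    """Pick the candidate view with the largest sum of visible saliency.

    saliency   : (num_vertices,) array of per-vertex saliency
    visibility : (num_views, num_vertices) boolean array; True where the
                 vertex is visible from that candidate view (assumed given)
    """
    # Sum the saliency of visible vertices for every candidate view.
    scores = visibility.astype(float) @ saliency
    return int(np.argmax(scores)), scores

# Usage: three candidate views of a four-vertex toy mesh.
saliency = np.array([0.1, 0.9, 0.2, 0.0])
visibility = np.array([
    [True,  False, True,  True],   # sees many vertices, but not the salient one
    [False, True,  False, False],  # sees only the most salient vertex
    [True,  True,  False, False],  # sees the salient vertex plus one more
])
best_view, scores = select_viewpoint(saliency, visibility)
```

The first view sees the most vertices but scores lowest, which shows why counting visible geometry alone differs from maximizing visible saliency.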

We have developed a model of mesh saliency using center-surround filters with Gaussian-weighted curvatures. We have shown how incorporating mesh saliency can visually enhance the results of several graphics tasks such as mesh simplification and viewpoint selection. For a number of examples we have shown in this research, one can see that our model of saliency is able to capture what most of us would classify as interesting regions in meshes. Not all such regions necessarily have high curvature. While we do not claim that our saliency measure is superior to mesh curvature in all respects, we believe that mesh saliency is a good start in merging perceptual criteria inspired by low-level human visual system cues with mathematical measures based on discrete differential geometry for graphics meshes.

- C. H. Lee, A. Varshney, and D. Jacobs. Mesh Saliency. ACM SIGGRAPH 2005 (Accepted) (PDF 18.7 MB)

We would also like to acknowledge Stanford Graphics Lab and Cyberware Inc. for providing the models for generating the images in this project.

This material is based upon work supported by the National Science Foundation under grants IIS 00-81847, ITR 03-25867, CCF 04-29753, and CNS 04-03313. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.
