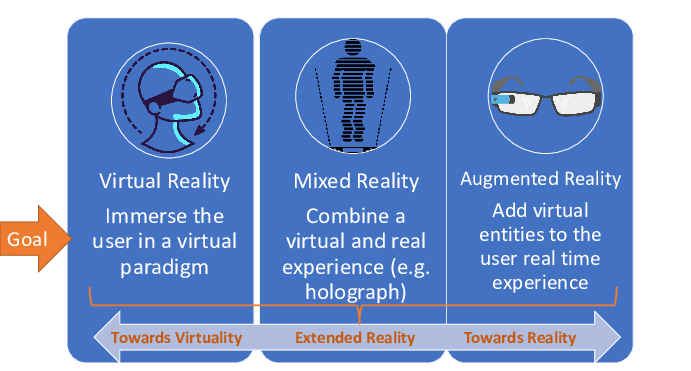

AR, VR, and MR, collectively referred to as XR, are becoming ubiquitous in human-computer interaction, with a wide and growing range of applications. This course examines advances in real-time multi-modal XR systems in which the user is 'immersed' in and interacts with a simulated 3D environment. Topics will include display, modeling, 3D graphics, haptics, audio, locomotion, animation, applications, immersion, and presence, and how they interact to create convincing virtual environments. We'll explore these fields and their current and future research directions.

By the end of the class, students will understand a wide range of research problems in XR, as well as the future of XR as a link between physical and virtual worlds, as described in the "Metaverse" visions.

The class assignments will involve working with the above topics on a real XR device, with the goal of building the skills to develop powerful multi-modal XR applications. Assignments may be completed in Unity or Unreal Engine 4, but the TAs can only provide technical support for Unity.

Prior knowledge of or experience with game development or XR is not necessary to succeed in the course! Unity uses object-oriented programming, and the technical learning in this class focuses on APIs and application design rather than programming skill, so intermediate experience with Java, C#, C++, or a similar language is sufficient.

Students will have access to Oculus Quest VR headsets (see image below) for this course, which they will be expected to use to complete most of the assignments and the final project. Details on the headset (HMD) rental system will be announced later.