18 Dynamic scheduling – Loop Based Example

Dr A. P. Shanthi

The objective of this module is to go through a clock level simulation for a sequence of instructions including branches that undergo dynamic scheduling. We will look at how the data structures maintain the relevant information and how the hazards are handled.

We will first of all do a recap of dynamic scheduling. Dynamic Scheduling is a technique in which the hardware rearranges the instruction execution to reduce the stalls, while maintaining data flow and exception behavior. The advantages of dynamic scheduling are:

- It handles cases when dependences are unknown at compile time (e.g., because they may involve a memory reference)

- It simplifies the compiler

- It allows code compiled for one pipeline to run efficiently on a different pipeline

- Hardware speculation, a technique with significant performance advantages, builds on dynamic scheduling

In a dynamically scheduled pipeline, all instructions pass through the issue stage in order; however they can be stalled or bypass each other in the second stage and thus enter execution out of order. Instructions will also finish out-of-order.

To allow instructions to execute out of order, we need to make some changes to the decode stage. So far, we have assumed that during the decode stage, the MIPS pipeline decodes the instruction and also reads the operands from the register file. With dynamic scheduling, the decode stage, now called the issue stage, only decodes the instruction and checks for structural hazards. If there is no structural hazard, i.e. a reservation station is available, the instruction is issued. The instruction then waits in its reservation station until the instructions it depends on produce their data, and only then reads its operands. This enables in-order issue, out-of-order execution and out-of-order completion.

The entire book keeping is done in hardware. The hardware considers a set of instructions called the instruction window and tries to reschedule the execution of these instructions according to the availability of operands. The hardware maintains the status of each instruction and decides when each of the instructions will move from one stage to another. The dynamic scheduler introduces register renaming in hardware and eliminates WAW and WAR hazards.

In Tomasulo's approach, register renaming is provided by reservation stations (RSs). Each functional unit has a few reservation stations associated with it. When an instruction is issued, a reservation station is allocated to it. The reservation station stores information about the instruction and buffers the operand values (when available). It fetches and buffers an operand as soon as the operand becomes available, not necessarily from the register file. This helps in avoiding WAR hazards. If an operand is not available, the reservation station records which instruction will supply it. The renaming is done through the mapping between the registers and the reservation stations. When a functional unit finishes its operation, the result is broadcast on a result bus, called the common data bus (CDB). This value is written to the appropriate register and also to any reservation station waiting for that data. When two instructions are to modify the same register, only the last output updates the register file, thus handling WAW hazards. Thus, the register specifiers are renamed to reservation stations, which may outnumber the registers. For load and store operations, we use load and store buffers, which contain data and addresses, and act like reservation stations.
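The way operand buffering sidesteps a WAR hazard can be illustrated with a minimal Python sketch. The register names, the values and the `issue` helper are assumptions made for illustration only; they are not part of any real simulator.

```python
# Hypothetical register file; names and values are illustrative only.
regs = {"F0": 1.5, "F2": 2.0}

def issue(op, src1, src2, regs):
    """Allocate a reservation-station entry and copy the operand values now."""
    return {"op": op, "Vj": regs[src1], "Vk": regs[src2]}

# An earlier instruction reads F2 at issue time ...
rs_entry = issue("MUL", "F0", "F2", regs)

# ... and a later instruction overwrites F2 before the multiply executes.
regs["F2"] = 99.0

# The multiply still uses the value F2 held when it was issued.
result = rs_entry["Vj"] * rs_entry["Vk"]
print(result)  # 3.0
```

Because the value is copied into the reservation station, the later write to F2 does not have to wait for the earlier read.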

The three steps in a dynamic scheduler are listed below:

- Issue

  - Get the next instruction from the FIFO queue

  - If an RS is available, issue the instruction to the RS, along with the operand values if they are available

  - If an RS is not available, there is a structural hazard and the instruction stalls

  - If an earlier instruction has not been issued, subsequent instructions cannot be issued

  - If operand values are not available, the instruction waits for the operands to arrive on the CDB

- Execute

  - When an operand becomes available, store it in any reservation station waiting for it

  - When all operands are ready, start executing the instruction

  - Loads and stores are maintained in program order through the effective address calculation

  - No instruction is allowed to initiate execution until all branches that precede it in program order have completed

- Write result

  - Write the result on the CDB, and from there into the registers and any reservation stations and store buffers waiting for it

  - Stores must wait until both the address and the value are received
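The in-order issue rule and the structural-hazard stall from the list above can be sketched as follows. This is a simplified model under stated assumptions: the instruction names, the `try_issue` helper and the station counts are hypothetical, and execution and write-back are not modelled.

```python
from collections import deque

def try_issue(queue, free):
    """Try to issue the instruction at the head of the FIFO queue.

    Returns the issued instruction name, or None if the head stalls on a
    structural hazard (no free reservation station of the needed kind).
    """
    if not queue:
        return None
    name, kind = queue[0]
    if free[kind] == 0:
        return None          # head stalls, so nothing behind it can issue
    free[kind] -= 1
    queue.popleft()
    return name

# Program order: three multiplies, then a load (illustrative names).
queue = deque([("MULTD-1", "mult"), ("MULTD-2", "mult"),
               ("MULTD-3", "mult"), ("LD-4", "load")])
free = {"mult": 2, "load": 3}   # free reservation stations per kind

issued = [try_issue(queue, free) for _ in range(4)]
print(issued)  # ['MULTD-1', 'MULTD-2', None, None]
```

The load behind the stalled multiply is not issued even though a load buffer is free, because issue is strictly in order.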

The dynamic scheduler maintains three data structures – the reservation station, a register result data structure that keeps track of the instruction that will modify a register and an instruction status data structure. The third one is more for understanding purposes. The reservation station components are as shown below:

- Name: identifies the reservation station

- Op: operation to perform in the unit (e.g., + or –)

- Vj, Vk: values of the source operands

  - Store buffers have a V field, holding the result to be stored

- Qj, Qk: reservation stations producing the source operands (the values to be written)

  - Store buffers only have Qi, for the RS producing the result

- Busy: indicates that the reservation station or FU is busy

Register result status—Indicates which functional unit will write each register, if one exists. It is blank when there are no pending instructions that will write that register. The instruction status gives the status of each instruction in the instruction window.
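The fields described above map naturally onto a small record type. The following Python sketch is one possible representation, not the layout of any particular implementation; the class name, the `ready` helper and the value assumed for F2 are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ReservationStation:
    name: str
    busy: bool = False
    op: Optional[str] = None
    vj: Optional[float] = None   # value of the first source operand, if known
    vk: Optional[float] = None   # value of the second source operand, if known
    qj: Optional[str] = None     # RS that will produce the first operand
    qk: Optional[str] = None     # RS that will produce the second operand

    def ready(self) -> bool:
        """An entry can execute once it is busy and waits on no producer."""
        return self.busy and self.qj is None and self.qk is None

register_result_status = {"F0": "Load1"}   # Load1 will write F0
register_file = {"F2": 2.0}                # assumed value of F2

# Issue MULTD F4, F0, F2 into the Mult1 entry.
mult1 = ReservationStation("Mult1")
mult1.busy = True
mult1.op = "MULTD"
mult1.qj = register_result_status.get("F0")   # F0 is pending: record its producer
mult1.vk = register_file["F2"]                # F2 is available: buffer its value
register_result_status["F4"] = mult1.name     # Mult1 will write F4

print(mult1.ready())  # False
```

The entry is not ready because `qj` still names Load1; it becomes ready once that result arrives on the CDB.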

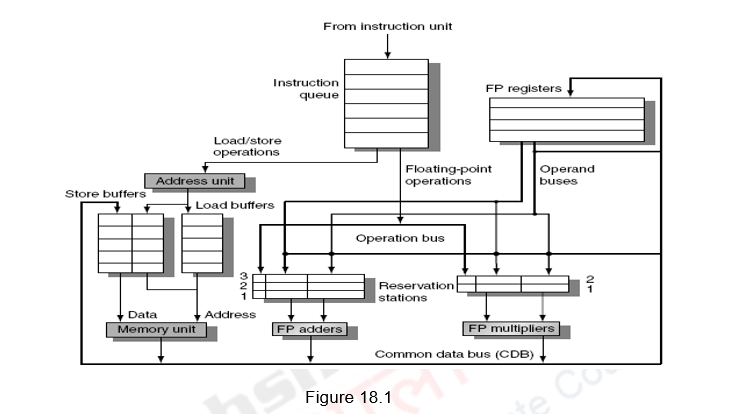

Figure 18.1 shows the organization of Tomasulo's dynamic scheduler. Instructions are taken from the instruction queue and issued. During the issue stage, the instruction is decoded and allocated an RS entry. The RS also buffers the operands if they are available. Otherwise, the RS entry records, in its Q field, the RS that will produce the pending value. The results are passed through the CDB and go to the appropriate register, as dictated by the register result data structure, as well as to the pending RSs.

Having looked at the basics of Tomasulo's dynamic scheduler, we shall now discuss how dynamic scheduling happens for a sequence of instructions. The previous module discussed a case where there were no branches. When we look at performing optimizations within a single basic block, neither the compiler nor the hardware has many options. A basic block is a straight-line code sequence with no branches in, except at the entry, and no branches out, except at the exit. With an average dynamic branch frequency of 15% to 25%, we normally have only 4 to 7 instructions executing between a pair of branches. Additionally, the instructions in the basic block are likely to depend on each other. So, the basic block does not offer much scope for exploiting ILP.

To obtain substantial performance enhancements, we must exploit ILP across multiple basic blocks. The simplest method is to exploit loop-level parallelism, i.e. parallelism among the iterations of a loop. Vector architectures are one way of handling loop-level parallelism. Otherwise, we will have to look at either dynamic or static methods of exploiting it. One of the common methods is loop unrolling, done either dynamically by the hardware using dynamic branch prediction, or statically by the compiler using static branch prediction.
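As a rough check on the basic block size quoted above, if a fraction f of the executed instructions are branches, then on average one instruction in every 1/f is a branch. This is a back-of-the-envelope estimate that counts the branch itself:

```latex
\[
\text{instructions per branch} \approx \frac{1}{f_{\text{branch}}},
\qquad \frac{1}{0.25} = 4,
\qquad \frac{1}{0.15} \approx 6.7
\]
```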

This loop-based example will illustrate how the hardware dynamically unrolls the loop using dynamic branch prediction. We also assume that instructions beyond the branch are fetched and issued based on the dynamic branch predictor's prediction. However, these instructions beyond the branch are not executed until the branch is resolved. This is because, if the branch prediction turns out to be wrong and we have already executed instructions and written into memory or the register file, those writes cannot be undone. That is, we are not speculatively executing instructions.

Let us consider the loop as shown below.

Loop: LD F0, 0(R1)

MULTD F4, F0, F2

SD F4, 0(R1)

SUBI R1, R1, #8

BNEZ R1, Loop

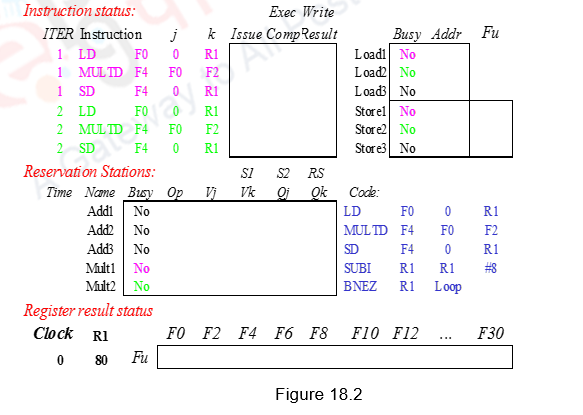

Figure 18.2 shows the sequence of instructions considered along with the three data structures maintained. We shall assume three Add reservation stations and two Mul reservation stations. There are also three load buffers and three store buffers. Assume that Add has a latency of 2 clocks, Multiply takes 4 clocks, the first load takes 8 clocks because of an L1 cache miss and the second load takes 1 clock (hit). We shall consider two iterations of the loop. Register R1 is initialized to 80, so that the loop gets executed 10 times. For the simulation to be clear, we will show clocks for the integer instructions SUBI and BNEZ. However, in reality, these integer instructions will be ahead of the floating point instructions.
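To see what the loop computes and why R1 = 80 gives 10 iterations, here is a minimal Python rendering of the five instructions. The memory contents and the value of F2 are made up for illustration; only the initial R1 value and the decrement of 8 come from the text.

```python
# Memory modelled as a dict from byte address to value; contents are made up.
memory = {addr: float(addr) for addr in range(8, 88, 8)}   # addresses 8..80
f2 = 2.0          # assumed scalar held in F2
r1 = 80           # initial value of R1, as in the text
iterations = 0

while True:
    f0 = memory[r1]        # LD    F0, 0(R1)
    f4 = f0 * f2           # MULTD F4, F0, F2
    memory[r1] = f4        # SD    F4, 0(R1)
    r1 -= 8                # SUBI  R1, R1, #8
    iterations += 1
    if r1 == 0:            # BNEZ  R1, Loop  (falls through when R1 == 0)
        break

print(iterations)  # 10
```

Each iteration touches a different address, so successive iterations are independent of one another, which is what allows the hardware to overlap them.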

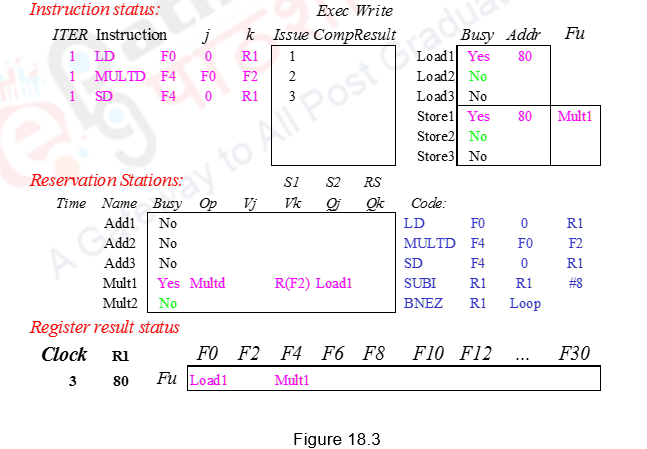

During the first clock cycle, Load1 is issued. A load buffer entry is allocated, its status is now busy, and the contents of R1 and the offset 0, both needed for the effective address calculation, are stored in the buffer. The register result status shows that register F0 is to be written by the instruction identified by Load buffer1.

During the second clock cycle, Load1 moves from the issue stage to the execution stage. The effective address (0+R1) is calculated (80) and stored in Load buffer1. Meanwhile, instruction 2, i.e. Mul1, is issued. It is allocated the Mult1 entry, and the contents of register F2 are fetched and stored in Vk. The other operand, F0, cannot be fetched now and has to wait for Load1 to finish. This is indicated in the Qj field as Load1. The Mult1 reservation station is marked busy. The register result status shows that register F4 is to be written by the instruction identified by Mult1.

During the third clock cycle, Store1 is issued. The store buffer entry Store1 is allocated for this store. The values 0 and (R1) for the effective address calculation are stored there. Also, the data field indicates that Mult1 has to produce the data for Store1. Note that Load1 has a cache miss and has not performed the memory access. The Mul1 instruction needs data from the Load1 instruction and is also stalled. This scenario is indicated in Figure 18.3.

During the fourth clock cycle, the Sub instruction is issued to the integer unit. Store1 calculates its effective address (80) and stores it in the Store1 entry. During the fifth clock cycle, the Branch instruction is issued to the integer unit.

During the sixth clock cycle, assuming that the branch predictor predicts the branch to be taken, dynamic unrolling of the loop is done and Load2 of the second iteration is issued to the Load2 entry. This entry is marked busy. The register result status shows that register F0 is to be written by the instruction identified by Load2. Note that though we are using the same register F0 for both the load instructions, a WAW hazard does not happen because only the later of the two loads will write into F0. Meanwhile, the Sub instruction finishes execution.

During the seventh clock cycle, the Mul instruction of the second iteration is issued. It is allocated the Mult2 entry, and the contents of register F2 are fetched and stored in Vk. The other operand, F0, cannot be fetched now and has to wait for Load2 to finish. This is indicated in the Qj field as Load2. The Mult2 reservation station is marked busy. The register result status shows that register F4 is to be written by the instruction identified by Mult2. This overwrites the earlier entry stating that Mult1 has to modify register F4, avoiding a WAW hazard. Observe that the renaming allows the register file to be completely detached from the computation, and the first and second iterations are completely overlapped. The Sub instruction writes its result. The Branch is data dependent on Sub and has not yet executed. Because of this, the Load2 after the Branch does not begin execution.

During the eighth clock cycle, Store2 is issued. The store buffer entry Store2 is allocated for this store. Also, the data field indicates that Mult2 has to produce the data for Store2. Note that Load1 has not yet finished the memory access. The Mul instructions need data from the Load instructions and are also stalled. The Branch instruction is resolved in this clock cycle.

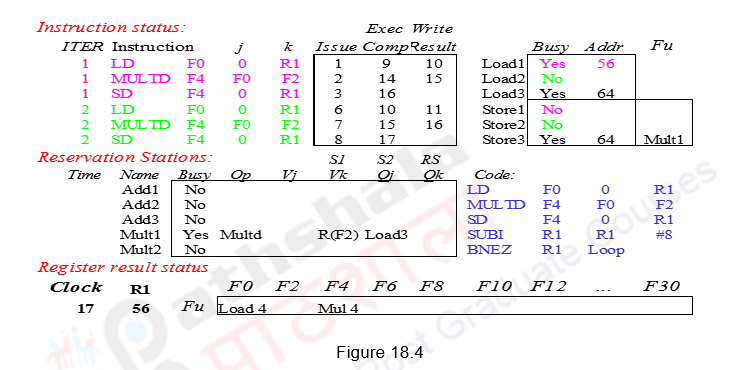

During the ninth clock cycle, the effective address calculation for Load2 and Store2 happens. Meanwhile, Load1 finishes execution (its 8 clocks cover the address calculation in clock cycle 2 and the memory access in clock cycles 3-9) and the Sub of the second iteration is issued. In the tenth clock cycle, Load1 writes its result on the CDB, which also supplies the operand that Mult1 is waiting for. The second Branch instruction is also issued. The Load2 instruction also performs its memory access here.

During the eleventh clock cycle, Mul1 starts executing and Load2 writes its result on the CDB and hence into register F0. Sub2 finishes execution. Note that the value loaded by Load1 is never written into F0. Load2 has produced the data for Mult2, and so the Mul2 instruction is now ready for execution. Load3 is issued in this clock cycle.
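Why the value from Load1 never reaches F0 can be sketched with the register result status alone. The helper names and the loaded values below are hypothetical; the clock numbers in the comments follow the walkthrough above.

```python
register_file = {"F0": 0.0}
register_result_status = {}

def issue_load(tag, dest):
    """Record that `tag` is now the (latest) producer of register `dest`."""
    register_result_status[dest] = tag

def write_result(tag, value):
    """Broadcast on the CDB; update only registers still waiting for `tag`."""
    for reg, producer in list(register_result_status.items()):
        if producer == tag:
            register_file[reg] = value
            del register_result_status[reg]

issue_load("Load1", "F0")      # clock 1
issue_load("Load2", "F0")      # clock 6: replaces the Load1 mapping
write_result("Load1", 10.0)    # clock 10: F0 no longer waits for Load1
f0_after_load1 = register_file["F0"]
write_result("Load2", 20.0)    # clock 11: F0 is updated by the later load

print(f0_after_load1, register_file["F0"])  # 0.0 20.0
```

The Load1 value is still delivered to Mult1 through the CDB; only the register file write is skipped.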

During the twelfth clock cycle, Mul2 starts executing and Sub2 writes its result. The third Mul instruction cannot be issued as there is a structural hazard (no free Mult reservation station). The second Branch gets resolved in clock cycle thirteen. The Mul1 instruction finishes execution in clock cycle fourteen and the Mul2 instruction finishes execution in clock cycle fifteen. Mul1 writes its result in clock cycle fifteen and Mul2 writes its result in clock cycle sixteen. Therefore, Store1 completes in clock cycle sixteen and Store2 completes in clock cycle seventeen. Mul3 can be issued only in the sixteenth clock cycle, and the other instructions can be issued in subsequent clock cycles. The final schedule is shown in Figure 18.4.
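The clock numbers for the floating point chain can be reproduced with a few lines of arithmetic. The one-cycle gaps between stages in this sketch are inferred from the walkthrough above and are assumptions about this particular simulation, not general properties of the algorithm; `fp_chain` is a hypothetical helper.

```python
MUL_LATENCY = 4   # clocks, as assumed in the text

def fp_chain(load_write_cycle):
    """Cycles for the multiply and store that depend on a load's CDB write."""
    mul_start = load_write_cycle + 1            # operand captured, execute next cycle
    mul_finish = mul_start + MUL_LATENCY - 1    # last execution cycle
    mul_write = mul_finish + 1                  # CDB write one cycle after finishing
    store_complete = mul_write + 1              # store completes after receiving the data
    return {"mul_start": mul_start, "mul_finish": mul_finish,
            "mul_write": mul_write, "store_complete": store_complete}

iter1 = fp_chain(load_write_cycle=10)   # Load1 writes in clock 10
iter2 = fp_chain(load_write_cycle=11)   # Load2 writes in clock 11

# Mult1 is freed when Mul1 writes its result, so Mul3 can issue one cycle later.
mul3_issue = iter1["mul_write"] + 1

print(iter1)       # {'mul_start': 11, 'mul_finish': 14, 'mul_write': 15, 'store_complete': 16}
print(iter2)       # {'mul_start': 12, 'mul_finish': 15, 'mul_write': 16, 'store_complete': 17}
print(mul3_issue)  # 16
```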

Observe that there is in-order issue, out-of-order execution and out-of-order completion. Due to the renaming process, multiple iterations use different physical destinations for the registers (dynamic loop unrolling). This is why Tomasulo's approach is able to overlap multiple iterations of the loop. The reservation stations permit instruction issue to advance past the integer control flow operations. They buffer the old values of registers, thus totally avoiding the WAR stall that would have happened otherwise. Thus we can say that Tomasulo's approach builds the data flow dependency graph on the fly. However, the performance improvement depends on the accuracy of the branch prediction. If the branch prediction goes wrong, the instructions fetched and issued from the wrong path will have to be discarded, leading to a penalty.

The advantages of the Tomasulo’s dynamic scheduling approach are as follows:

- (1) The distribution of the hazard detection logic:

  - Tomasulo's approach uses distributed reservation stations and the CDB

  - If multiple instructions are waiting on a single result, and each instruction already has its other operand, then the instructions can be released simultaneously by the broadcast on the CDB

  - If a centralized register file were used, the units would have to read their results from the registers when register buses are available

- (2) The elimination of stalls for WAW and WAR hazards

  - The buffering of the operands in the reservation stations, as soon as they become available, does the mapping between the registers and the reservation stations. This renaming process avoids WAR hazards. Even if a subsequent instruction modifies the register file, there is no problem because the operand has already been read and buffered.

  - When two or more instructions write into the same register, leading to WAW hazards, only the latest register information is maintained in the register result status. This renaming avoids WAW hazards.
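The simultaneous release of several waiting instructions by one CDB broadcast can be sketched as below. The station contents and the `broadcast` helper are hypothetical and only illustrate the tag matching; a real design does these compares in parallel hardware.

```python
def broadcast(tag, value, stations):
    """Deliver a CDB result to every station waiting on `tag`.

    Returns the names of the stations that became ready as a result.
    """
    released = []
    for st in stations:
        hit = False
        for q, v in (("Qj", "Vj"), ("Qk", "Vk")):
            if st[q] == tag:                 # associative compare on the tag
                st[v], st[q] = value, None   # capture the value, clear the tag
                hit = True
        if hit and st["Qj"] is None and st["Qk"] is None:
            released.append(st["name"])
    return released

stations = [
    {"name": "Add1",  "Vj": None, "Vk": 1.0,  "Qj": "Load1", "Qk": None},
    {"name": "Mult1", "Vj": None, "Vk": 2.0,  "Qj": "Load1", "Qk": None},
    {"name": "Add2",  "Vj": None, "Vk": None, "Qj": "Load1", "Qk": "Mult1"},
]

released = broadcast("Load1", 5.0, stations)
print(released)  # ['Add1', 'Mult1']
```

Add1 and Mult1 are released by the same broadcast, while Add2 captures the value but keeps waiting for Mult1.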

However, this approach is not without drawbacks. The following are the drawbacks:

- Complexity

  - The hardware becomes complicated with the book keeping done and the CDB and the associative compares

  - Many associative stores (CDB) at high speed

- Performance limited by Common Data Bus

  - Each CDB must go to multiple functional units. This leads to high capacitance and high wiring density

  - With only one CDB, the number of functional units that can complete per cycle is limited to one!

  - Multiple CDBs will lead to more FU logic for parallel associative stores

- Non-precise interrupts!

  - The out-of-order execution will lead to non-precise interrupts. We will address this later when we discuss speculation.

We have not discussed dependences with respect to memory locations. They will also have to be handled properly. A load and a store can safely be done out of order, provided they access different addresses. If a load and a store access the same address, then either

- the load is before the store in program order and interchanging them results in a WAR hazard, or

- the store is before the load in program order and interchanging them results in a RAW hazard.

Similarly, interchanging two stores to the same address results in a WAW hazard. Hence, to determine if a load can be executed at a given time, the processor can check whether any uncompleted store that precedes the load in program order shares the same data memory address as the load. Similarly, a store must wait until there are no unexecuted loads or stores that are earlier in program order and share the same data memory address.
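The two address checks described above can be written as simple predicates. This is a sketch that assumes all earlier effective addresses are already known; the function names are hypothetical, and the addresses 80 and 72 correspond to the first two iterations of the example loop.

```python
def load_may_execute(load_addr, earlier_store_addrs):
    """A load may proceed if no uncompleted earlier store uses its address."""
    return load_addr not in earlier_store_addrs

def store_may_execute(store_addr, earlier_load_addrs, earlier_store_addrs):
    """A store must wait for earlier unexecuted loads and stores to the same address."""
    return (store_addr not in earlier_load_addrs
            and store_addr not in earlier_store_addrs)

# Load2 reads address 72 while Store1 (address 80) is still pending.
print(load_may_execute(72, {80}))          # True: different addresses

# A load from address 80 would have to wait for Store1 (RAW through memory).
print(load_may_execute(80, {80}))          # False

# Store2 (address 72) with Load2 done and only Store1 (address 80) still pending.
print(store_may_execute(72, set(), {80}))  # True
```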

To summarize, we have looked at an example of dynamic scheduling with branches, wherein the hardware does a dynamic reorganization of code at run time. Based on the branch prediction, loops are dynamically unrolled. Instructions beyond the branch are fetched and issued, but not executed until the branch is resolved. The book keeping done and the various steps carried out during every clock cycle have been elaborated.
