UMD Computer Scientists Create Unremovable Watermark to Protect Intellectual Property From Theft

Companies spend vast amounts of time and millions of dollars on the development of neural network models that are used in facial recognition, artificial intelligence (AI) art generators and other cutting-edge technologies. These pricey, proprietary models could be prime targets for theft in the future, which prompted a team of University of Maryland researchers to create an improved watermark to protect them.

Watermarks are a way for organizations to claim authorship of the models they create, akin to a painter signing their name in the corner of a painting. However, current watermarking methods are vulnerable to savvy adversaries who know how to tweak the network parameters in a way that goes unnoticed, allowing them to claim a model as their own. By contrast, the new watermark developed by the UMD team cannot be removed without making major changes that compromise the model itself. The team presented its watermark at the International Conference on Machine Learning in July 2022.

“We can prove mathematically that it’s not possible to remove the watermark by making small changes to the network parameters,” said one of the paper’s co-authors, Tom Goldstein. Goldstein is an associate professor of computer science and Pier Giorgio Perotto Endowed Professor at UMD. “It doesn't matter how clever you are—unless the change you make to the neural network parameters is very large, you cannot come up with a method for removing the watermark.”

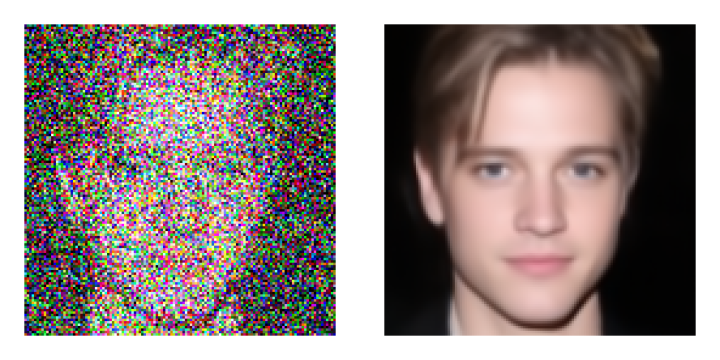

Watermarks typically refer to a signature or logo placed over an image to show ownership. But in this case, the watermarking process uses AI to assign unexpected labels to randomly generated “trigger images.” For example, the label “dog” could be assigned to an image of a cat.

Because a stolen model carries the same label and image pairings as the original, this watermarking process makes it possible to detect when an adversary has stolen a model. If 10 trigger images are used, the odds of the same pairings occurring by chance in two models are extremely slim: 1 in 10 billion, a figure consistent with each trigger image having 10 equally likely labels.
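The 1-in-10-billion figure can be reproduced with a short calculation. The sketch below assumes, since the article does not say so explicitly, that each trigger image can receive one of 10 labels and that an unrelated model would land on each secret label independently and with equal probability. The function name is illustrative and does not come from the paper.

```python
from fractions import Fraction


def chance_match_probability(num_triggers: int, num_labels: int) -> Fraction:
    """Probability that an unrelated model reproduces every secret label.

    Assumption: each trigger image is labeled independently and uniformly
    at random among `num_labels` classes.
    """
    if num_triggers < 0 or num_labels < 1:
        raise ValueError("need num_triggers >= 0 and num_labels >= 1")
    # Each trigger matches with probability 1/num_labels; all must match.
    return Fraction(1, num_labels) ** num_triggers


p = chance_match_probability(num_triggers=10, num_labels=10)
print(f"1 in {p.denominator:,}")  # prints "1 in 10,000,000,000"
```

Under the same assumptions, adding trigger images or label classes makes an accidental match even less likely, since the probability shrinks by a factor of the number of labels with every extra trigger.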

“A watermark is something you do to a neural network,” Goldstein explained. “It’s essentially specific secret behaviors that you embed in the neural network, and then if you want to check whether the neural network belongs to your organization, you observe whether it has those secret behaviors. Somebody who created their own model wouldn't have those behaviors by random chance.”
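The ownership check Goldstein describes can be sketched as a comparison between a suspect model's predictions and the owner's secret labels. This is a minimal illustration, not the team's implementation: `watermark_match_rate`, `looks_like_our_model`, the 0.9 threshold and the toy models are all hypothetical, and integers stand in for the trigger images.

```python
from typing import Callable, Hashable, Sequence


def watermark_match_rate(
    predict: Callable[[Hashable], str],
    triggers: Sequence[Hashable],
    secret_labels: Sequence[str],
) -> float:
    """Fraction of trigger inputs for which the model returns the secret label."""
    if not triggers or len(triggers) != len(secret_labels):
        raise ValueError("triggers and secret_labels must be non-empty and equal in length")
    hits = sum(1 for x, y in zip(triggers, secret_labels) if predict(x) == y)
    return hits / len(triggers)


def looks_like_our_model(predict, triggers, secret_labels, threshold: float = 0.9) -> bool:
    """Flag a model whose match rate meets the (assumed) threshold."""
    return watermark_match_rate(predict, triggers, secret_labels) >= threshold


# Toy data: integers stand in for randomly generated trigger images.
triggers = list(range(10))
secret_labels = ["dog", "cat", "ship", "frog", "truck",
                 "deer", "bird", "horse", "plane", "car"]
secret = dict(zip(triggers, secret_labels))


def stolen_model(x):
    # A copied model reproduces the embedded secret behavior.
    return secret[x]


def independent_model(x):
    # A model trained elsewhere has no reason to agree with the secret labels.
    return "cat"


print(watermark_match_rate(stolen_model, triggers, secret_labels))       # 1.0
print(watermark_match_rate(independent_model, triggers, secret_labels))  # 0.1
```

Using a threshold below 100 percent is a design choice in this sketch: it tolerates a copy that has lost a few trigger labels, while an independently built model would be very unlikely to reach it by chance.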

Goldstein said that, to his knowledge, no neural network model has ever been stolen. However, this technology is still in its infancy, and AI is expected to become increasingly common in commercial products in the coming years. With that comes the growing threat of theft, potentially leading to significant financial losses for a company.

“Because of the growth of AI, I think it’s likely that, down the road, we will see allegations of model theft being made,” Goldstein said. “However, I also think it’s very likely that companies will be savvy enough to employ some sort of watermarking technique to protect themselves against that.”

###

Their study, titled “Certified Neural Network Watermarks with Randomized Smoothing,” was led by computer science Ph.D. students Arpit Bansal and Ping-yeh Chiang (M.S. ’22, computer science) and co-authored by Goldstein, computer science Ph.D. student Michael Curry and Computer Science Associate Professor John Dickerson. In addition, they collaborated with researchers from the Adobe Research lab located in the university’s Discovery District.

This research was supported by DARPA GARD, Adobe Research and the Office of Naval Research. This article does not necessarily reflect the views of these organizations.

Written by Emily Nunez
